Sustainable AI, Real Impact

Sustainable AI has achieved notable efficiency gains, such as Google Gemini’s reported 33-fold reduction in energy per text prompt to just 0.24 Wh, alongside smaller models and renewable-powered data centers that lower per-inference emissions. However, its real impact remains limited: AI’s explosive growth has driven data center electricity demand up 165%, and emissions are projected to triple by 2030, reaching a level comparable to Italy’s total output or New York City’s annual footprint. Verifiable net reductions in overall AI emissions are scarce, with one 2026 analysis finding no material evidence that generative AI is curbing planetary emissions, despite the hype. Efficiency helps scalability but cannot offset surging usage without stricter governance and systemic change.
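The point that efficiency cannot offset surging usage comes down to simple arithmetic: total energy is energy per prompt multiplied by the number of prompts. The sketch below illustrates this under stated assumptions. The 33-fold gain comes from the text above, while the usage-growth figures and the function name `net_energy_ratio` are hypothetical and chosen only for illustration.

```python
def net_energy_ratio(efficiency_gain: float, usage_growth: float) -> float:
    """Return new total energy divided by old total energy.

    Total energy = energy per prompt x number of prompts, so dividing the
    per-prompt energy by `efficiency_gain` while the prompt count is
    multiplied by `usage_growth` scales the total by their quotient.
    """
    if efficiency_gain <= 0 or usage_growth < 0:
        raise ValueError("efficiency_gain must be > 0 and usage_growth >= 0")
    return usage_growth / efficiency_gain


# 33-fold per-prompt gain (from the text) against hypothetical usage growth.
for growth in (10, 33, 100):
    ratio = net_energy_ratio(33, growth)
    trend = "rises" if ratio > 1 else "does not rise"
    print(f"usage x{growth}: total energy {trend} (ratio {ratio:.2f})")
```

A ratio above 1 means total energy use has grown despite the efficiency gain. With a 33-fold gain, any usage growth beyond 33-fold pushes the total up.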

Reliable, green, and efficient.

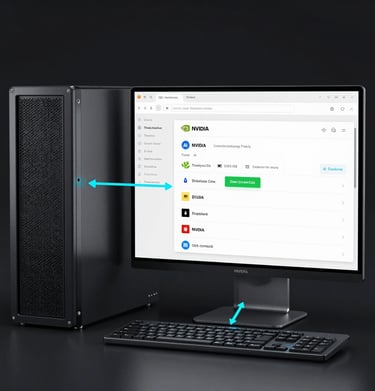

Gallery

Snapshots of our green-powered AI data center in action.

Green Life Data’s eco-friendly AI hosting cut our energy costs and boosted performance.

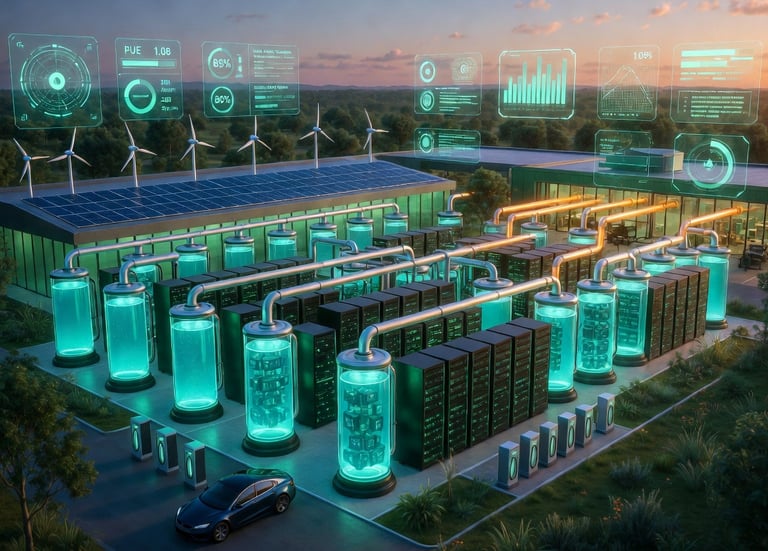

Advanced Projects

Showcasing our sustainable AI infrastructure milestones.

AI Pods

An AI Pod (sometimes stylized as AI POD or GPU POD, short for "Point of Delivery") is a modular, pre-integrated, self-contained computing unit optimized specifically for artificial intelligence (AI) and high-performance computing (HPC) workloads. It functions as a standardized "building block" or mini data center that combines high-density GPU accelerators, high-speed interconnects, storage, networking, and AI-optimized software into a plug-and-play system. Multiple AI Pods can be clustered together to form larger-scale infrastructure, effectively acting as one unified high-performance server or even an entire AI "factory."

This design contrasts sharply with traditional servers or general-purpose data centers. Vendors such as Cisco, ASUS, NVIDIA, and HPE have popularized the concept with validated, turnkey solutions. The core idea is to deliver massive parallel processing power for tasks like training large language models, running inference at scale, fine-tuning, retrieval-augmented generation (RAG), agentic AI workflows, simulations, and data analytics, without the custom engineering typically required for AI infrastructure.

Key Components of an AI Pod

Compute: Dense arrays of high-end GPUs (e.g., NVIDIA Blackwell, Rubin, or GB200 series; AMD equivalents) paired with CPUs (Intel Xeon or AMD EPYC). These handle the parallel matrix math essential for neural networks. NVIDIA's DGX systems, for instance, scale to racks with 8+ GPUs per node, supporting liquid cooling for extreme densities.

Networking: Ultra-low-latency, high-bandwidth fabrics like NVIDIA NVLink, InfiniBand (e.g., NVIDIA Quantum switches), or 100/200/400 GbE (e.g., Cisco Nexus switches). This ensures efficient data movement across thousands of GPUs without bottlenecks.

Storage: High-performance, parallel file systems (e.g., VAST Data, WEKA, DDN, or NetApp) with GPUDirect Storage for direct GPU-to-storage access, enabling data pipelines up to 10× faster than traditional setups.

Software & Management: Full-stack integration including NVIDIA AI Enterprise, CUDA/ROCm libraries, frameworks like PyTorch/TensorFlow, orchestration (Kubernetes, Slurm), and management tools (Cisco Intersight or NVIDIA Mission Control). Security layers (e.g., Cisco AI Defense) and automation (Ansible/Terraform) are built-in.

Physical Design: Often rack-based or containerized (1–2 MW modular units), supporting air or advanced liquid cooling to handle 40+ kW per rack, far beyond what traditional server racks draw.
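To make the physical sizing concrete, the sketch below estimates how many racks fit in a pod's power budget. It assumes the 1–2 MW figure is available entirely for IT load, which is a simplification because cooling and power-conversion overhead also draw from the budget. The function name `racks_for_pod` is hypothetical.

```python
import math


def racks_for_pod(pod_power_mw: float, rack_power_kw: float) -> int:
    """Return how many full racks fit within a pod's power budget.

    Assumes the whole budget is available to the racks (no cooling or
    power-conversion overhead), so treat the result as an upper bound.
    """
    if pod_power_mw <= 0 or rack_power_kw <= 0:
        raise ValueError("power values must be positive")
    return math.floor(pod_power_mw * 1000 / rack_power_kw)


# Figures from the text: 1-2 MW modular units at 40 kW per rack.
print(racks_for_pod(1.0, 40.0))  # 25
print(racks_for_pod(2.0, 40.0))  # 50
```

At higher densities the same budget supports far fewer racks, which is why rack density and cooling design are usually planned together.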

Green Tech

Green Tech (short for Green Technology or Sustainable Technology) refers to innovative, environmentally responsible solutions designed to minimize energy consumption, reduce carbon emissions, conserve water, and lower overall ecological impact while maintaining or improving performance. In the context of data centers — especially high-density AI Pods — Green Tech focuses on the full lifecycle: construction, operations, and decommissioning. Data centers are among the most energy-intensive facilities on Earth, already consuming roughly 2% of global electricity (projected to grow sharply with AI workloads). Without Green Tech, this could double or triple by 2030, driving up costs, straining grids, and increasing emissions and water use. Green Tech transforms data centers from energy hogs into efficient, sustainable “AI factories” by tackling the two biggest culprits: cooling (often 30–50% of total power) and overall energy waste.

Innovative Cooling Solutions: The Heart of Green Tech for Data Centers

Traditional air cooling (fans + CRAC units) is outdated for modern AI workloads. High-performance GPUs in AI Pods can generate 40–250+ kW per rack, far beyond what air can handle efficiently. Relying on air at these densities leads to hotspots, throttling, a high Power Usage Effectiveness (PUE, defined as total facility energy divided by IT equipment energy; the ideal is ~1.0), massive water evaporation in chillers, and huge electricity bills.
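The PUE definition above is easy to compute from metered energy. The following is a minimal sketch with made-up meter readings. The function names `pue` and `overhead_share` are hypothetical helpers, not part of any vendor tool.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be below IT energy")
    return total_facility_kwh / it_equipment_kwh


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility energy not consumed by IT equipment."""
    if pue_value < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1 - 1 / pue_value


# Hypothetical readings: 1,500 kWh drawn by the facility, 1,000 kWh by IT gear.
value = pue(1500, 1000)
print(value)                             # 1.5
print(round(overhead_share(value), 3))   # 0.333
```

A PUE of 1.5 means about a third of the facility's energy goes to cooling, power conversion, and other overhead rather than to computing. At a PUE of 2.0 that share is one half.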

Key innovative cooling technologies in 2025–2026:

Direct-to-Chip Liquid Cooling (DLC / Cold Plates): Coolant (water-glycol or dielectric fluid) flows through plates directly attached to CPUs/GPUs. It offers up to 15% better energy efficiency than air cooling, enables denser server packing, reduces fan noise and power, and supports AI’s extreme thermal loads. It is widely adopted in NVIDIA DGX, Cisco AI PODs, and hyperscale facilities.

Immersion Cooling (Single-Phase or Two-Phase): Servers are fully submerged in non-conductive dielectric fluid (or oil). Heat is transferred directly to the liquid, which is then cooled via heat exchangers.

Up to 94% cooling-energy savings versus traditional air systems.

Supports ultra-high densities (>250 kW/rack) with zero server fans.

Closed-loop systems are often waterless, slashing water use by up to 90% (critical as a 1 MW data center can consume millions of gallons of water yearly for evaporative cooling).

Bonus: Waste heat is captured at higher temperatures (up to 60–70°C), which makes it easier to reuse. Microsoft’s 2025 lifecycle analysis showed immersion and cold-plate technologies cut greenhouse-gas emissions 15–21% from “cradle to grave.”

Advanced Hybrid & Smart Systems

Rear-door heat exchangers + free cooling (using outside air when cold).

AI-driven thermal management: Sensors + machine learning dynamically adjust flow, predict hotspots, and optimize based on workload.

Phase-change materials and thermosyphon loops for passive, ultra-efficient heat transfer.

Panasonic and other vendors launched dedicated 2026 AI liquid-cooling lines with vertically integrated supply chains.

These solutions are now standard in next-gen AI Pods (ASUS, NVIDIA SuperPOD, Cisco Validated Designs), enabling rack densities that were not achievable with air cooling.
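For liquid-cooled racks, the coolant flow needed to carry away a given heat load follows from the standard heat-balance relation Q = m · c_p · ΔT. The sketch below applies it under simplifying assumptions: pure water as the coolant (a water-glycol mix has a lower specific heat and needs more flow), all rack heat captured by the liquid loop, and a hypothetical 10 K temperature rise across the rack.

```python
# Approximate specific heat of liquid water in J/(kg*K); an assumption,
# since real loops often use water-glycol or dielectric fluids.
WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0


def coolant_mass_flow_kg_s(
    heat_load_kw: float,
    delta_t_k: float,
    specific_heat: float = WATER_SPECIFIC_HEAT_J_PER_KG_K,
) -> float:
    """Mass flow (kg/s) needed to absorb `heat_load_kw` with a coolant
    temperature rise of `delta_t_k`, from Q = m_dot * c_p * delta_T."""
    if heat_load_kw < 0 or delta_t_k <= 0 or specific_heat <= 0:
        raise ValueError("heat load must be >= 0; delta_t and c_p must be > 0")
    return heat_load_kw * 1000 / (specific_heat * delta_t_k)


# Hypothetical 100 kW rack with a 10 K coolant temperature rise.
flow = coolant_mass_flow_kg_s(100, 10)
print(f"{flow:.2f} kg/s")  # 2.39 kg/s
```

Doubling the allowed temperature rise halves the required flow. This is one reason warmer return temperatures are attractive in liquid-cooled designs.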

Energy-Saving Solutions Complementing Cooling

Cooling is only half the story. Green Tech also targets power generation, distribution, and usage:

On-Site Renewables & Storage: Solar arrays, wind turbines, battery systems, geothermal, small modular reactors (SMRs), and hydrogen fuel cells. Many hyperscalers aim for 100% renewable matching or even carbon-negative operations by 2030.

Waste Heat Reuse: Captured heat from liquid cooling warms nearby buildings, greenhouses, or district heating networks — turning a liability into revenue.

AI-Optimized Operations: Predictive algorithms schedule workloads to low-price/low-carbon grid times, right-size servers, and minimize idle power. Emerging Power Compute Effectiveness (PCE) metrics go beyond PUE to measure real compute-per-watt.

Efficient Hardware & Circular Design: High-efficiency power supplies (Titanium-rated), low-power chips, modular prefabricated pods, and server reuse/recycling to cut e-waste and Scope 3 emissions.

Green Construction: Low-carbon concrete, recycled materials, and water-conscious site planning.
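The idea of scheduling workloads into low-carbon grid periods can be sketched as a sliding-window search over an hourly carbon-intensity forecast. This is an illustrative sketch only. The forecast values and the function name `best_start_hour` are hypothetical, and a production scheduler would also weigh electricity price, deadlines, and capacity.

```python
from typing import Sequence


def best_start_hour(carbon_intensity: Sequence[float], job_hours: int) -> int:
    """Return the start index of the contiguous window of `job_hours`
    with the lowest total carbon intensity (earliest window wins ties)."""
    if job_hours <= 0 or job_hours > len(carbon_intensity):
        raise ValueError("job_hours must be between 1 and the forecast length")
    window = sum(carbon_intensity[:job_hours])
    best_total, best_start = window, 0
    for start in range(1, len(carbon_intensity) - job_hours + 1):
        # Slide the window: add the hour entering, drop the hour leaving.
        window += carbon_intensity[start + job_hours - 1] - carbon_intensity[start - 1]
        if window < best_total:
            best_total, best_start = window, start
    return best_start


# Hypothetical hourly forecast in gCO2/kWh for the next eight hours.
forecast = [450, 420, 380, 210, 190, 230, 400, 480]
print(best_start_hour(forecast, 3))  # 3
```

Here a deferrable three-hour job would start at hour 3, when the forecast intensity is lowest. The same search works with price data in place of carbon intensity.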

How Green Tech Dramatically Improves a Data Center

Integrating these solutions (especially in AI Pods) delivers transformative benefits:

Massive Energy & Cost Reduction: Liquid/immersion cooling alone can cut total facility energy by 30–40% or more. PUE drops from 1.5–2.0 (legacy) to 1.1 or below. OPEX savings are substantial, since energy is often 30–50% of a data center’s lifetime costs. This matters all the more in 2026 as AI drives power densities skyward.

Superior Performance & Density for AI Workloads: AI Pods can pack far more GPUs without thermal throttling, enabling faster model training/inference in smaller footprints. Hardware lifespan extends because components run cooler and more stably.

Environmental & Regulatory Wins

CO₂ emissions drop sharply (data centers already contribute ~0.9% of global energy-related GHGs).

Water consumption plummets with closed-loop systems — critical in drought-prone areas.

Meets or exceeds global mandates (EU Energy Efficiency Directive, U.S. state rules, corporate ESG targets). Hyperscalers like Microsoft, Google, and Meta use these to hit 2030 carbon-neutral goals.

Scalability, Reliability & New Revenue Streams: Modular Green AI Pods deploy faster and expand easily. Heat reuse generates extra income. Facilities become more resilient to grid strain and attract tenants/investors who prioritize sustainability.

Overall ROI & Future-Proofing: Lower TCO, higher utilization, and compliance reduce risk. As of 2026, data centers without liquid cooling and renewables risk being uncompetitive or even unbuildable due to power/water constraints.
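The energy savings cited above can be cross-checked against the PUE figures with a short calculation. For an unchanged IT load, facility energy scales with PUE, so moving from a legacy PUE to 1.1 saves a fraction of 1 − (new PUE / old PUE). The sketch below assumes a constant IT load running all year. The 1 MW load and the function names are hypothetical.

```python
HOURS_PER_YEAR = 8760


def facility_energy_saving(pue_before: float, pue_after: float) -> float:
    """Fractional cut in total facility energy for an unchanged IT load."""
    if pue_before < 1.0 or pue_after < 1.0:
        raise ValueError("PUE values cannot be below 1.0")
    return 1 - pue_after / pue_before


def annual_kwh_saved(it_load_kw: float, pue_before: float, pue_after: float) -> float:
    """Facility energy saved per year (kWh) at a constant IT load."""
    if it_load_kw < 0:
        raise ValueError("IT load must be non-negative")
    return it_load_kw * HOURS_PER_YEAR * (pue_before - pue_after)


# Legacy PUE values from the text, each compared with a target PUE of 1.1.
for legacy in (1.5, 1.6, 1.8, 2.0):
    saving = facility_energy_saving(legacy, 1.1)
    print(f"PUE {legacy} -> 1.1: {saving:.0%} less facility energy")

# Hypothetical 1 MW IT load moving from PUE 1.6 to 1.1.
print(f"{annual_kwh_saved(1000, 1.6, 1.1):,.0f} kWh saved per year")
```

Under these assumptions, legacy PUEs of roughly 1.6 to 1.8 correspond to the 30–40% range, and a legacy PUE of 2.0 gives about 45%.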

Contact

Head Office

Green Life Enterprises LLC

7175 E. Camelback Road

Suite 707

Scottsdale, Arizona 85251

greenlifedatacenters@gmail.com

+1-813-220-0001

© 2026. All rights reserved.

Canadian Office

Green Life Enterprises LLC

3142 Nicholson Ave

Suite 10

New Waterford, Nova Scotia B1H 1N8