AI in Mental Health and Addiction: Promise, Pitfalls, and the Path Forward

Neil L. Rideout

4/23/2026 · 5 min read

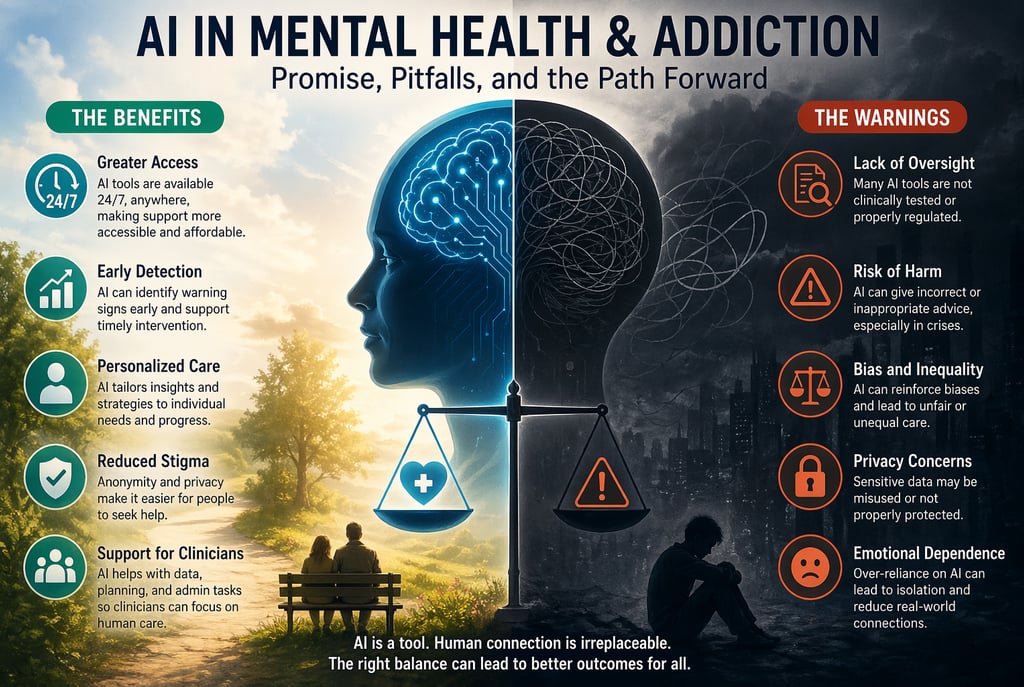

Artificial intelligence (AI) is rapidly transforming nearly every aspect of healthcare, and mental health and addiction treatment are no exception. Around the world, mental health systems are under immense pressure, with long wait times, high costs, and shortages of trained professionals leaving many people without adequate support. In this context, AI is emerging as a potential way to bridge these gaps. From therapy chatbots to predictive tools that assess relapse risk, AI is already being used to expand access and reshape how care is delivered. However, while the technology offers meaningful benefits, it also introduces serious risks that must be carefully considered.

One of the most significant advantages of AI in mental health and addiction care is its ability to increase accessibility. Unlike traditional therapy, which often requires appointments, travel, and financial resources, AI-powered tools are available at any time and from virtually anywhere. This makes them especially valuable for individuals in rural or underserved communities, where access to care may be limited or nonexistent. For someone experiencing anxiety, depression, or substance cravings, the ability to access immediate support can make a critical difference. AI systems can provide real-time responses, offering coping strategies or simply a space to express thoughts without judgment, which can be particularly helpful during moments of distress.

Another major benefit lies in early detection and intervention. AI systems are capable of analyzing large amounts of data, including behavioral patterns, speech, and even subtle changes in communication. These systems can identify warning signs of mental health decline or potential relapse in addiction recovery before they become severe. Early intervention is one of the most effective ways to improve outcomes in both mental health and addiction treatment, and AI has the potential to make this process faster and more precise. By recognizing patterns that may not be obvious to human observers, AI can prompt timely support and potentially prevent crises.
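To make the idea of pattern-based early warning concrete, here is a minimal sketch in Python. It assumes a hypothetical app that collects a daily self-reported mood rating on a 1-10 scale and flags any day that falls far below the person's own recent baseline. The function name, the seven-day window, and the thresholds are illustrative assumptions, not a clinically validated method, and a flag here would only be a prompt for a human to check in.

```python
from statistics import mean, stdev


def flag_warning_days(scores, window=7, threshold=2.0, min_spread=0.5):
    """Return indices of days whose score drops well below the recent baseline.

    scores:     daily self-reported mood ratings (hypothetical 1-10 scale)
    window:     number of preceding days that form the personal baseline
    threshold:  standard deviations below baseline that count as a warning
    min_spread: floor on the spread, so a perfectly flat baseline still works
    """
    flagged = []
    for day in range(window, len(scores)):
        baseline = scores[day - window:day]
        mu = mean(baseline)
        sigma = max(stdev(baseline), min_spread)
        z = (scores[day] - mu) / sigma
        if z <= -threshold:
            flagged.append(day)
    return flagged


# One sharp drop on day 8 after a stable week
ratings = [7, 7, 6, 7, 8, 7, 7, 7, 3, 7]
print(flag_warning_days(ratings))  # -> [8]
```

Comparing each person against their own history, rather than a population average, is one simple way to reflect the point that warning signs look different from one individual to the next.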

Personalization is another area where AI shows promise. Traditional approaches to mental health and addiction treatment often rely on generalized methods, but AI can tailor recommendations to the individual. By learning from user behavior, preferences, and history, AI systems can provide customized coping strategies, recovery plans, and feedback. This level of personalization can increase engagement and make treatment feel more relevant and effective. For individuals in addiction recovery, personalized insights into triggers and behaviors can be especially valuable in maintaining long-term progress.

AI also has the potential to reduce stigma associated with seeking help. Many people hesitate to reach out for mental health support due to fear of judgment or shame, particularly in cases involving addiction. Interacting with an AI system can feel less intimidating, offering a sense of anonymity and privacy that encourages openness. For some individuals, this can serve as a first step toward seeking further help, lowering the barrier to entry into the mental health care system.

In addition to supporting individuals directly, AI can assist clinicians and healthcare systems. It can automate administrative tasks, analyze patient data, and help inform treatment decisions, allowing professionals to focus more on direct patient care. In this way, AI has the potential to enhance the efficiency and effectiveness of existing mental health services rather than replace them.

Despite these benefits, the risks associated with AI in mental health and addiction care are substantial. One of the most pressing concerns is the lack of clinical accuracy and oversight. Many AI tools are not rigorously tested or regulated, and some explicitly state that they are not intended to diagnose or treat medical conditions. This creates a situation where users may rely on systems that are not equipped to provide safe or appropriate guidance. In high-risk situations, such as suicidal ideation or severe addiction relapse, the limitations of AI can have serious consequences.

Another concern is the potential for harmful or misleading responses. AI systems generate outputs based on patterns in data rather than true understanding, which means they can sometimes produce inappropriate or even dangerous suggestions. In the context of mental health, this could involve reinforcing negative thought patterns or failing to respond adequately to a crisis. In addiction scenarios, it might mean overlooking signs of relapse or offering ineffective coping strategies. These limitations highlight the importance of human oversight and the dangers of relying solely on automated systems for care.

Bias and inequality also present significant challenges. AI systems are trained on existing data, which may contain biases related to race, gender, or socioeconomic status. As a result, these systems can unintentionally perpetuate discrimination or provide unequal quality of care. In mental health, where trust and sensitivity are essential, such biases can undermine the effectiveness of treatment and further marginalize vulnerable populations.

Privacy and data security are additional areas of concern. Mental health and addiction data are highly sensitive, and the use of AI often involves collecting and analyzing personal information. Questions about how this data is stored, who has access to it, and how it is used remain critical. Inadequate protections or unclear policies can put users at risk, potentially exposing deeply personal information.
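One common safeguard is to replace direct identifiers with keyed hashes before data is analyzed, so that records can be linked without exposing who they belong to. The sketch below uses Python's standard `hmac` and `hashlib` modules; the `pseudonymize` function, the example key, and the record fields are hypothetical. It is only one piece of a real privacy design, which would also need encryption at rest, access controls, and careful key management.

```python
import hashlib
import hmac


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Return a keyed hash of an identifier.

    The same ID and key always give the same token, so records can still be
    linked, but the raw ID is not stored alongside the sensitive data.
    """
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


# Hypothetical key; in practice it would come from a secrets manager,
# never from source code.
key = b"example-key-for-illustration-only"

record = {
    "user": pseudonymize("jane.doe@example.com", key),
    "mood_score": 4,
}
print(record["user"][:16], "...")
```

Because the hash is keyed, someone who obtains the records but not the key cannot simply hash a list of known email addresses to re-identify users.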

There is also the issue of emotional dependence on AI systems. As these tools become more sophisticated and conversational, some users may begin to rely on them as substitutes for human relationships. This can lead to increased isolation and a reduced likelihood of seeking professional help. In some cases, excessive reliance on AI has been associated with worsening mental health outcomes, particularly when individuals withdraw from real-world support systems.

Perhaps the most fundamental limitation of AI in this field is the absence of genuine human connection. Mental health care is not only about providing information or strategies; it is also about empathy, understanding, and trust. Human therapists bring emotional depth and lived experience that AI cannot replicate. In addiction recovery especially, relationships with counselors, peers, and support networks often play a central role in healing. While AI can support these processes, it cannot replace the human element that is essential to effective care.

In addiction treatment specifically, AI is a double-edged sword. On one hand, it offers tools for monitoring behavior, predicting relapse, and providing immediate support during moments of vulnerability. On the other hand, it raises concerns about misinterpretation, lack of crisis response, and over-reliance on automated systems. Because the same tools can help or harm depending on how they are deployed, AI must be used with caution in addiction treatment.

Ultimately, the future of AI in mental health and addiction care will depend on how it is integrated into existing systems. Responsible use requires clear boundaries, transparency, and a commitment to safety. AI should be seen as a supplement to professional care rather than a replacement. Ensuring proper regulation, protecting user data, and maintaining human oversight will be essential in minimizing risks.

AI has the potential to make mental health and addiction support more accessible, personalized, and responsive. At the same time, it carries significant ethical and practical challenges that cannot be ignored. The goal should not be to replace human care, but to enhance it. When used thoughtfully, AI can be a valuable tool in addressing some of the most pressing challenges in mental health and addiction. When used carelessly, it risks deepening the very problems it aims to solve.
